If you’ve Googled a baby sleep question this year, AI Overviews are now your default first answer – Google’s three-bullet summaries served up before any link loads. Scroll an inch, however, and the Featured Snippet directly underneath often says something different. The 3am version of this is its own kind of awful: same Google, two answers, no obvious way to pick.

Key Takeaways

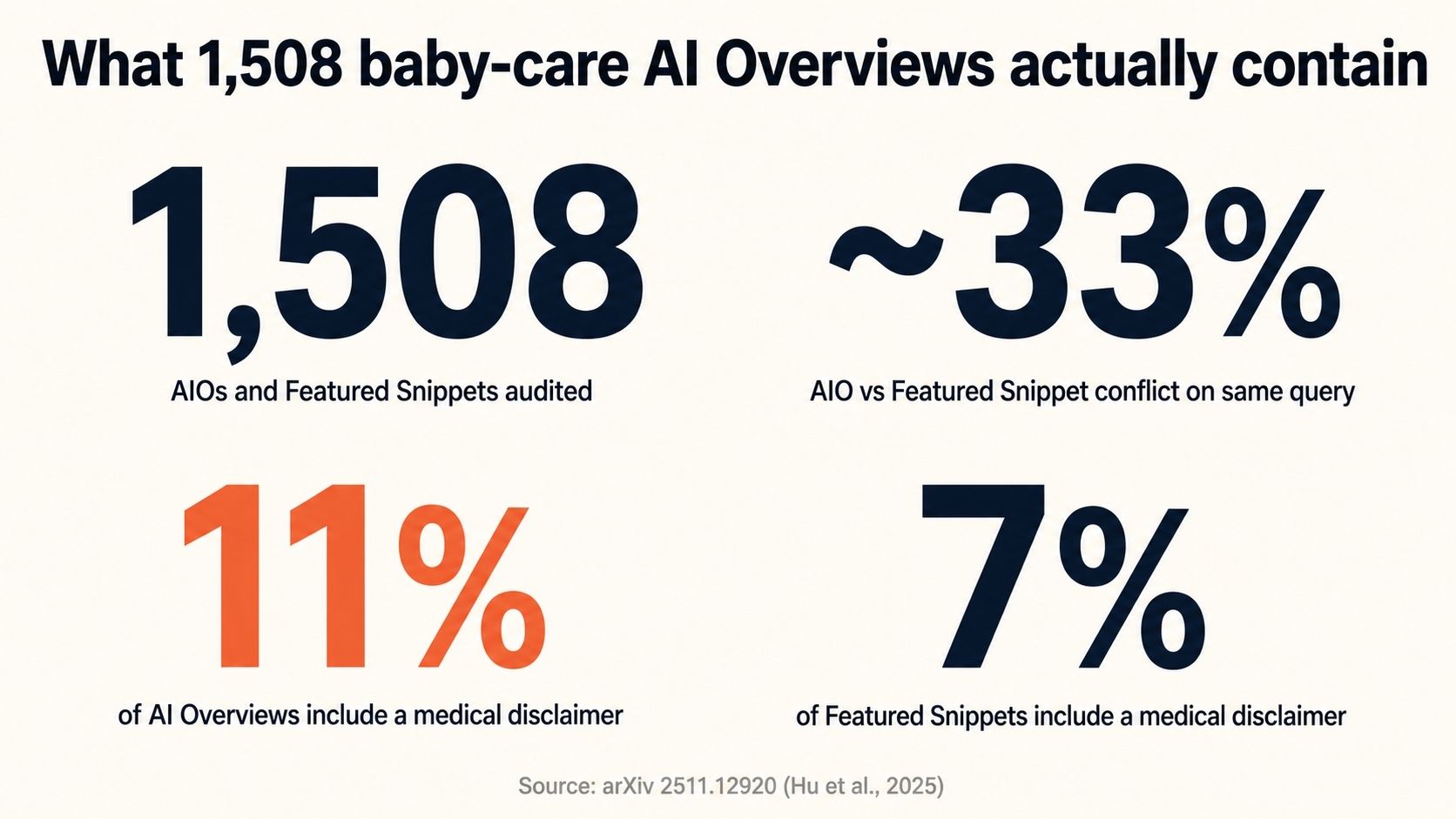

- A 2025 University of Zurich-led audit of 1,508 Google AI Overviews and Featured Snippets on baby and pregnancy care found that only 11% of AI Overviews included a medical disclaimer, and roughly 1 in 3 conflicted with the same query’s Featured Snippet.

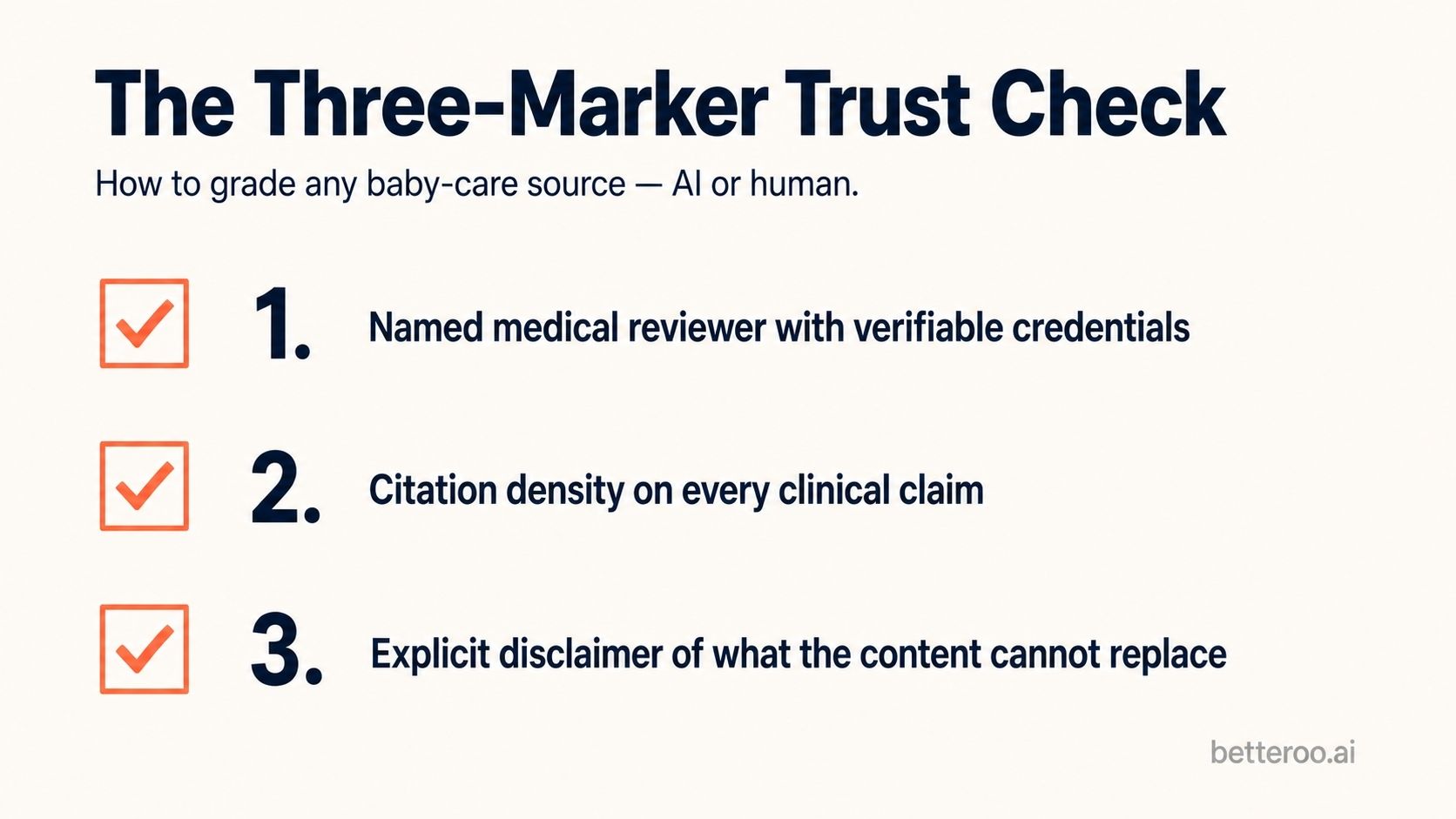

- Apply the Three-Marker Trust Check to any baby-content source: a named medical reviewer with credentials, citation density on every clinical claim, and an explicit disclaimer of what the content can’t replace.

- Parents can’t reliably distinguish AI-generated child-health content from content written by named experts. The detection problem is structural, not a literacy gap.

- When AI advice conflicts with your pediatrician’s, pediatrician wins for anything specific to your baby. Use AI for general informational questions, not for baby-specific decisions.

- Every Betteroo guide goes through medical review by our advisory board and carries an explicit medical disclaimer – the safeguard 89% of baby-care AI Overviews are missing.

“At 3am, parents don’t need a probability machine. They need someone who knows their baby.”

– Rachel Rothman, Chief Parenting Officer, Betteroo

AI Overviews now answer most baby health questions before any link loads. They sound certain. The Google branding makes them feel official.

On baby and pregnancy queries, though, they’re wrong often enough – and quietly enough – that most parents won’t notice until something doesn’t add up.

This is a guide for noticing earlier. We’ll give you a simple framework you can apply to any source. Try it on what’s normal for baby sleep first – that’s where most of the bad AI advice gets traction.

The AI Overviews audit that should change how parents Google

In late 2025, a team led by researchers at the University of Zurich, with collaborators at Stanford and Northeastern, did something nobody had bothered to do at scale: they audited 1,508 Google AI Overviews and Featured Snippets on baby care and pregnancy questions.¹ The findings are the reason every parent should change how they read AI answers.

Three numbers from that paper matter most:

- About 1 in 3 AI Overviews gave information that conflicted with the Featured Snippet for the same query. Two pieces of Google-surfaced answer content, on the same screen, disagreeing about the same baby.

- Only 11% of the AI Overviews on baby and pregnancy care included a medical disclaimer.

- Only 7% of the Featured Snippets did.

These were not edge cases. The audit covered the kinds of questions parents actually ask: feeding amounts, safe sleep, regression duration, when to call a doctor. And it matches the daily experience of anyone Googling baby health questions in 2026, now that Google shows AI Overviews on a wide range of health-adjacent queries.

| Audit dimension | Finding |
|---|---|
| AIOs and Featured Snippets audited | 1,508 across baby care and pregnancy queries |
| AIO vs Featured Snippet conflict rate (same query) | ~33% |
| AI Overviews containing a medical disclaimer | 11% |
| Featured Snippets containing a medical disclaimer | 7% |

Baby and pregnancy queries are the wrong category to be confidently wrong on. A bad sourdough recipe is a bad afternoon. A confidently wrong safe-sleep answer is something else entirely – and it’s being served first, before any source the parent could vet.

The Three-Marker Trust Check

Parents need a quick way to grade any source – AI Overview, blog, app, or article – before deciding how much weight to give it. After looking at how the best baby health content is built, we landed on three markers that consistently separate the trustworthy from the noise. We call it the Three-Marker Trust Check.

“Three things separate trustworthy baby health content from confident-sounding noise: a person who put their name on it, a citation you can actually click, and a clear statement of what the content can’t tell you.”

– Dr. Meidad Greenberg, MD, Betteroo Medical Reviewer

Marker 1 – a named medical reviewer with verifiable credentials

What to look for: an actual person’s name attached to the medical content, with a credential you can verify (MD, IBCLC, RD-CLC, etc.). “Reviewed by our medical team” is not a credential. “Reviewed by Dr. Jane Smith, board-certified pediatrician” is.

Marker 2 – citation density on every clinical claim

What to look for: sources cited at the claim level, not just decorative facts. If a piece says “babies sleep better with consistent bedtimes,” you should be able to click through to a study or guideline that says exactly that. AI Overviews almost never cite at the claim level – they cite at the page level, which is too coarse to be auditable.

Marker 3 – an explicit disclaimer of what the content can’t replace

What to look for: a clear statement that the content is general guidance and does not replace your pediatrician’s advice for your specific baby. The audit found this disclaimer present on only 11% of AIOs and 7% of Featured Snippets. Its absence isn’t neutral – it’s a signal that the content is over-confident by design.

Apply this check to any source – including this one. (This article has a named medical reviewer at the top, claim-level citations throughout, and a disclaimer near the end. That’s the point.)

Why parents can’t tell AI Overviews from expert content

Even health-literate parents have a hard time telling AI-generated child-health content apart from content written by named clinicians. The reason isn’t that parents are gullible. AI is just genuinely good at sounding knowledgeable, and there’s no asterisk warning you when it isn’t.

“We hear this from parents constantly: ‘The AI sounded so sure.’ That’s the trap. Sounding sure is not the same as being right.”

– Rachel Rothman, Chief Parenting Officer

Two things compound the problem for baby health specifically:

- AI sounds most confident exactly where it shouldn’t. It’s a pattern-completion engine, trained on whatever the internet has said about a question and tuned to give the most popular-sounding answer. For a stable, well-evidenced topic, that works. For questions where the answer changes as your baby grows – like sleep regressions, where the same behavior at 4 months means something different at 8 – that confidence is misleading.

- Baby sleep questions often don’t have one right answer. They have a right answer for your baby. AI doesn’t know your baby. It knows what most internet content says about babies-in-aggregate.

The most common failure pattern we see goes like this. A parent searches “why is my baby waking at night” and gets a cleanly written answer about the 4-month sleep regression. They conclude that’s the explanation. In fact, their 7-month-old might be having a feeding-related wake or a teething episode. Both look similar from the outside, yet each calls for a completely different response. AI doesn’t ask the disambiguating question. A pediatrician would.

Tired of guessing whose baby sleep advice to trust?

Get a personalized sleep plan reviewed by an actual pediatrician. Three minutes of questions, a real plan tailored to your baby.

Take the 3-Min Quiz →

What good looks like – applying the Three-Marker check

The framework is most useful when applied to the source you’re actually reading.

Take a typical AI Overview on a baby sleep question. It cites a few source pages, but no person reviewed the answer. Citations sit at the page level, so you can’t click through to the specific claim. And only 11% of baby-care AIOs include any kind of medical disclaimer.

That’s a 0 to 1 out of 3 on the Three-Marker check. Trustworthy enough for a definition. Not trustworthy enough to act on for your specific baby.
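If it helps to see the check written down as a checklist, here is a minimal sketch in Python. It is an illustration only: the `Source` class, its field names, and the `trust_score` function are hypothetical names made up for this example, and deciding whether a source really meets each marker is still a judgment you make by reading it.

```python
from dataclasses import dataclass


@dataclass
class Source:
    """Hypothetical record of what you observed about a source."""
    named_reviewer: bool         # Marker 1: named person with a verifiable credential
    claim_level_citations: bool  # Marker 2: each clinical claim links to its own source
    explicit_disclaimer: bool    # Marker 3: states what the content can't replace


def trust_score(source: Source) -> int:
    """Count how many of the three markers the source meets (0 to 3)."""
    return sum([
        source.named_reviewer,
        source.claim_level_citations,
        source.explicit_disclaimer,
    ])


# A typical AI Overview as described above: no named reviewer,
# page-level citations only, and usually no disclaimer.
typical_ai_overview = Source(
    named_reviewer=False,
    claim_level_citations=False,
    explicit_disclaimer=False,
)
print(trust_score(typical_ai_overview))
```

The score is a plain count, so all three markers carry equal weight. That is an assumption of this sketch; a reader may reasonably decide that a missing named reviewer matters more than a missing disclaimer.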

Now think about where AI Overviews pull their content from in the first place. The American Academy of Pediatrics. AASM consensus statements. Peer-reviewed sleep research. These foundational sources all pass the three-marker check easily – named experts, dense citations, explicit caveats. They’re the floor every other piece of baby health content builds on.

The job of consumer-facing parenting content isn’t to replace the AAP or the research literature. It’s to make those foundational sources usable for tired parents at 3am – keeping the rigor, adding the practical application. You can see what that looks like on our guide to common sleep training methods, where every method’s evidence base is cited and the medical review is named at the top.

What to do when AI Overviews conflict with your pediatrician

This is the most common version we hear from parents: the pediatrician said one thing at the four-month visit, and an AI Overview is now saying something different at 3am. The rule is simple: your pediatrician knows your baby. AI knows what the internet has said about babies in aggregate. When their advice conflicts, default to the pediatrician for anything specific to your baby’s body, history, or symptoms.²

“AI can describe what’s typical for babies in aggregate. It cannot examine your specific baby. When the two disagree, the one in the room with your child wins.”

– Dr. Meidad Greenberg, MD, Board-Certified Pediatrician

That said, there is a real space where AI-summarized answers are useful: generic informational questions where there’s broad clinical consensus and the answer doesn’t depend on your specific baby. The trick is knowing which kind of question you’re asking before you trust the answer. Use the matrix below – the underlying age-specific norms come from our hub on baby sleep schedule by age:

| Use AI / search summary for… | Call your pediatrician for… |
|---|---|
| General age-typical sleep ranges (e.g., “how much sleep at 6 months”) | Anything safe-sleep related (positioning, surface, products) |
| Definitions of common terms (regression, wake window, sleep cycle) | Suspected illness or red-flag symptoms during sleep |
| Comparing well-known sleep training methods | Persistent change in feeding or weight alongside sleep change |
| Reading what the AAP guideline says | Anything specific to YOUR baby’s history, prematurity, or medical conditions |
| Pre-appointment prep questions | Decisions about medications, supplements, or sleep aids |

The Three Questions Rule

Before acting on any AI answer, ask three questions. One: is this advice specific to my baby? Two: is it medical or just behavioral, and if medical, would I trust it without a name on it? Three: is the source actually named, or is the AI just citing “experts”? If you can’t answer all three confidently, treat the AI answer as a starting point, not a conclusion.

How Betteroo built for the trust-marker era

Most parenting content fails one of two ways. It’s clinically rigorous but emotionally cold – good for clinicians, exhausting at 3am. Or it’s warm but unsourced – easy to read, dangerous to act on.

Betteroo Guides are built to do both. Our content goes through medical review by our advisory board before it ships. Every clinical claim is cited. Every guide carries an explicit disclaimer. And every recommendation is grounded in our 32,000-parent State of Baby Sleep survey, the largest proprietary baby-sleep dataset we have.

More on how every guide is structured – and who reviews them – on the Betteroo team page.

Frequently asked questions

Are AI Overviews reliable for medical questions about my baby?

Not reliably enough to act on for baby-specific decisions. The 2025 University of Zurich-led audit of 1,508 AI Overviews and Featured Snippets on baby care and pregnancy found that only 11% of AI Overviews included a medical disclaimer and roughly 1 in 3 conflicted with the same query’s Featured Snippet. Use AI Overviews for general informational questions; don’t use them as a substitute for your pediatrician for anything specific to your baby.

Can ChatGPT or Google’s AI replace a pediatrician?

No. Your pediatrician knows your baby’s history, growth, and physical exam findings. AI is a pattern-completion engine – it can describe what’s typical for babies in aggregate, but it cannot evaluate what’s right for your specific baby. AI is most useful as a tool to prepare questions for an appointment, not as a substitute for it.

Why does AI give different answers to the same baby question?

Because AI generates the most likely text continuation given a prompt, and small prompt variations push it toward different answers. The 2025 audit documented a related inconsistency on the same results page: the AI Overview and the Featured Snippet for the same query gave conflicting information on roughly 1 in 3 baby-care queries. The variability is a structural feature of the technology.

What is a medical disclaimer and why does it matter on baby content?

A medical disclaimer is an explicit statement that the content is general guidance and does not replace personalized advice from a qualified clinician. It matters because it sets the right expectation for the reader and signals editorial honesty. Its absence is a signal that the content is over-confident by design – which the 2025 audit documented in 89% of baby-care AI Overviews.

How do I know if a baby sleep website is evidence-based?

Apply the Three-Marker Trust Check. (1) Look for a named medical reviewer with verifiable credentials, ideally on every article. (2) Check whether clinical claims are tied to specific cited sources you can click through to. (3) Look for an explicit disclaimer about what the content cannot replace. If a site fails any of the three markers, downgrade how much weight you give it.

Should I follow ChatGPT’s baby sleep advice?

Treat it as a starting point, not a conclusion. ChatGPT and similar AI assistants don’t have a medical reviewer, don’t cite at the claim level, and don’t consistently include disclaimers. They’re useful for understanding general concepts (what a wake window is, what the AAP recommends) and not appropriate for decisions specific to your baby’s body or symptoms.

What is an AI hallucination, and does it happen in baby health content?

An AI hallucination is when an AI confidently states something that is factually wrong or fabricated. It happens in baby health content – the 2025 audit documented conflicting and inaccurate information across 1,508 baby-care AI Overviews and Featured Snippets. The risk is that the false information is delivered with the same confident tone as the true information, making detection difficult.

Key takeaway

Apply the Three-Marker Trust Check – named medical reviewer, claim-level citations, explicit disclaimer – to every source you read about your baby. If a source can’t pass all three, treat its advice as a starting point, not a conclusion.

Disclaimer: This article is general parenting guidance. It is not a substitute for medical advice from your child’s pediatrician, who knows your baby’s specific history. If your baby has unusual sleep patterns, persistent illness, or any red-flag symptoms, contact your pediatrician.

Stop guessing. Get a real plan.

Three minutes of questions. A pediatrician-reviewed sleep plan tailored to your baby – not the median baby a probability machine averages over.

Get Personalized Sleep Help →

Sources

- Hu, D., Baumann, J., Urman, A., Lichtenegger, E., Forsberg, R., Hannak, A., & Wilson, C. (2025). Auditing Google’s AI Overviews and Featured Snippets: A Case Study on Baby Care and Pregnancy. arXiv:2511.12920. (University of Zurich, Stanford, Northeastern, Helsinki, Corvinus Budapest) https://arxiv.org/abs/2511.12920

- Pediatric Research (2026). Sleep and infant development in the first year. doi: 10.1038/s41390-026-04780-4. https://www.nature.com/articles/s41390-026-04780-4

- Moon, R.Y., Carlin, R.F., Hand, I., et al. (2022). Sleep-Related Infant Deaths: Updated 2022 Recommendations for Reducing Infant Deaths in the Sleep Environment. AAP Task Force on SIDS. Pediatrics, 150(1). https://pubmed.ncbi.nlm.nih.gov/35726558/